WHAT'S NEW IN AR/XR?

From Snap’s bet on augmented reality to Google’s teaser of the Google Glasses V3.0

By Immersiv’s Dev Lab • 3rd June 2022 • 6 min

This spring was certainly rich in announcements, and some of the most anticipated conferences of the year took place. From Snap’s Partner Summit, “Back to Reality”, to Google I/O 2022, discover what’s new in the AR world.

Snap Partner Summit 2022: “Back to Reality”

With such an evocative title for its annual conference, Snap’s statement is clear: AR is now part of our daily lives, and the company intends to capitalize on it. Snap is no longer just a camera company: it sits among the leaders of the AR industry, and augmented reality has become an increasingly important part of its business model. On Snapchat alone, Snap reports 6 billion daily interactions with AR Lenses.

So, what were the big announcements regarding AR at the Partner Summit?

Besides a strong focus on augmented shopping and AR-powered commerce, Snap tried hard to show how augmented reality can positively impact our world.

First things first, Snap shared some news about its partner program, the Spectacles ambassador program. This program lets selected creators try on and experiment with Snap’s AR glasses, which are not available for sale. It has been running for a couple of years now, and this event was an opportunity for Snap to showcase some of the ambassadors’ work. If you look carefully at the video below, you will see the experience we designed for the LA Rams in February!

AR connects us, and new features like VoiceML, 3D Hand Tracking, and Connected Lenses are allowing us to express ourselves more fully – further merging the physical and digital worlds in profound new ways. pic.twitter.com/rkVr3T39k7

— Spectacles (@Spectacles) April 28, 2022

To make AR ever more accessible to businesses and brands, Snap announced a new augmented reality 3D asset manager. It will make it faster and easier for businesses to build and optimise 3D models for any product in their catalogue. How? By using a new computer vision-enabled solution that will also allow retailers who don’t have 3D models of their products to run virtual try-on using 2D images.

Snap’s Augmented Reality 3D Asset Manager Tool – courtesy of Snap

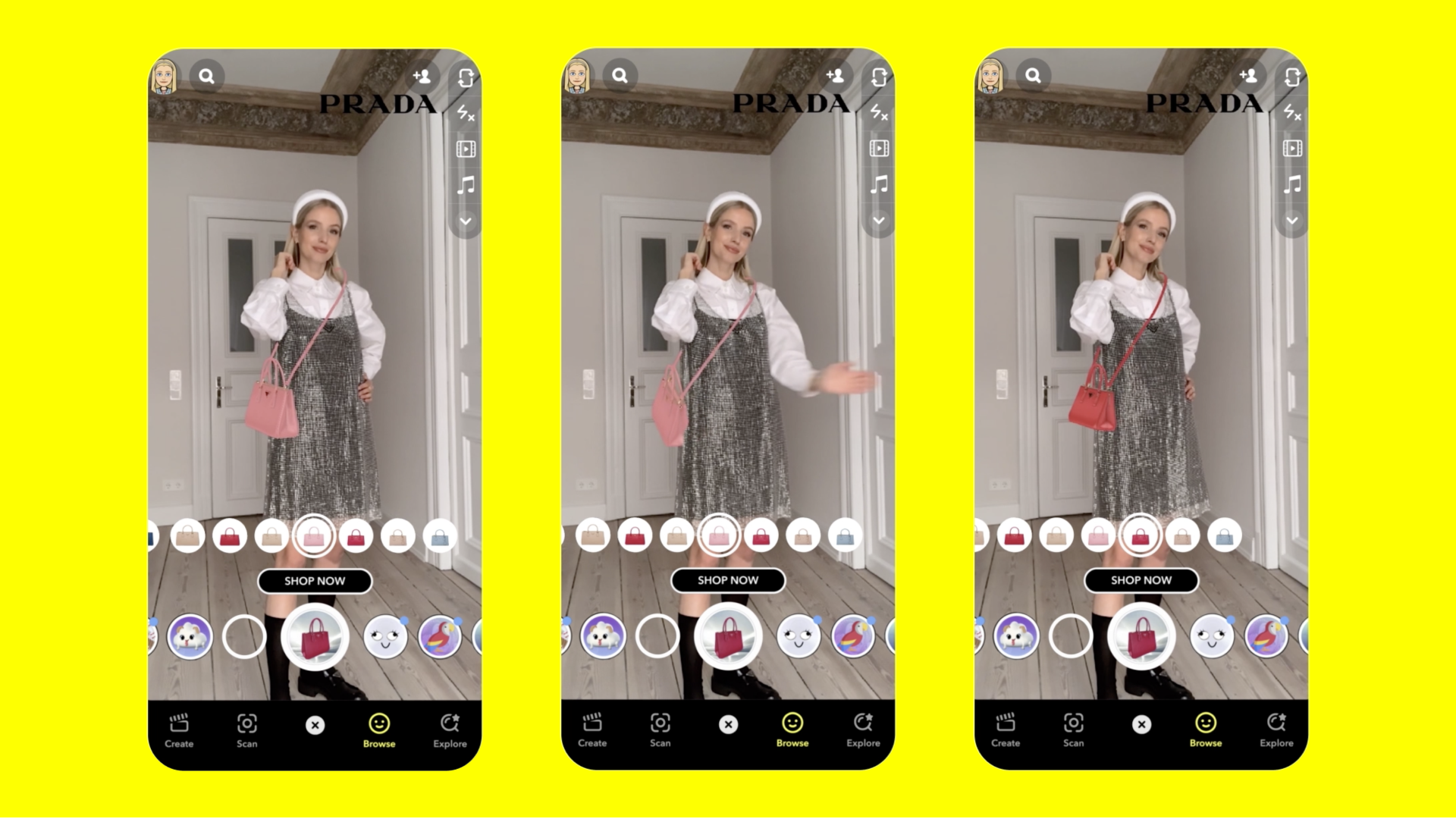

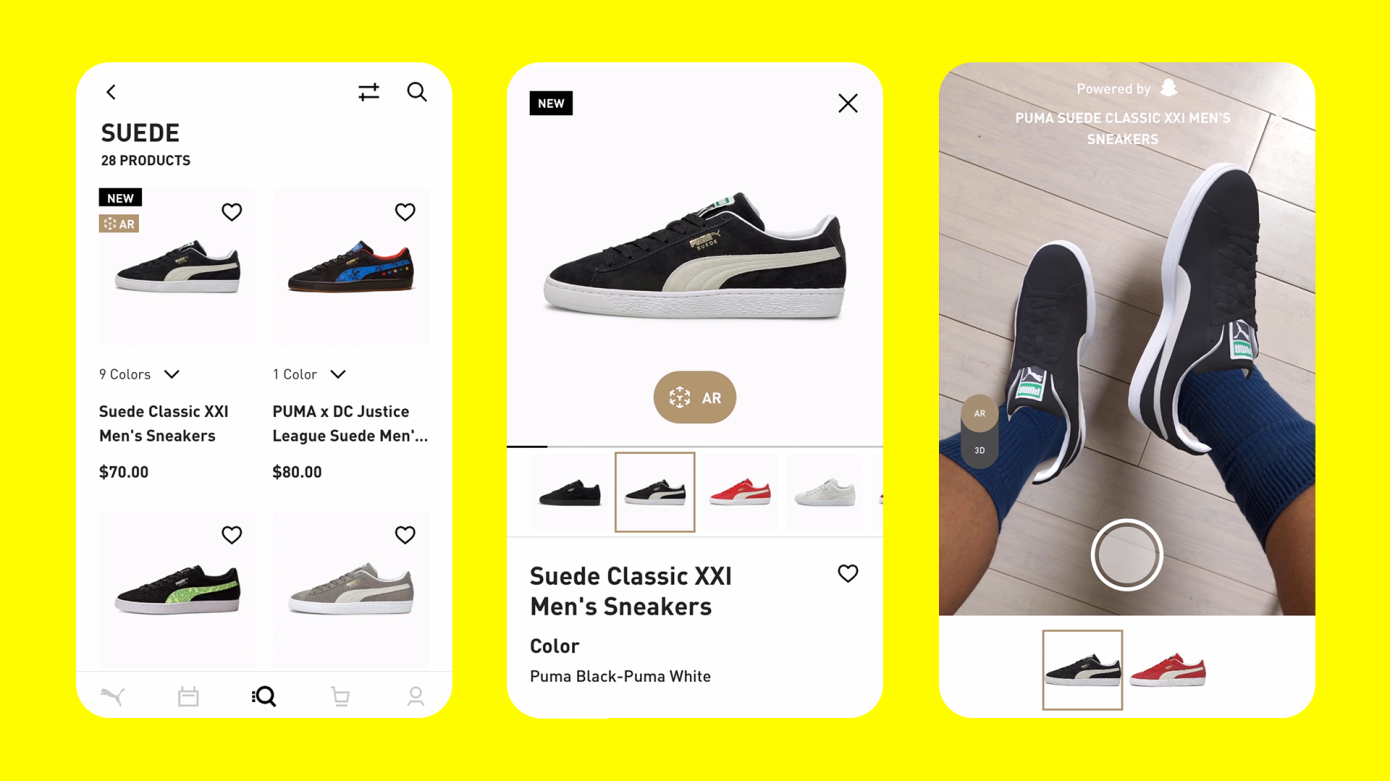

Speaking of virtual try-on, Camera Kit for AR Shopping, a specialized SDK, launched during the Summit on iOS and Android, with a desktop version coming soon. It will allow clothing retailers to use Snap’s AR shopping tools in their own apps and on their own sites, all powered by Snap of course. For now, Puma is one of the early partners using this technology.

Puma’s Augmented Website using Snap’s tech – courtesy of Snap

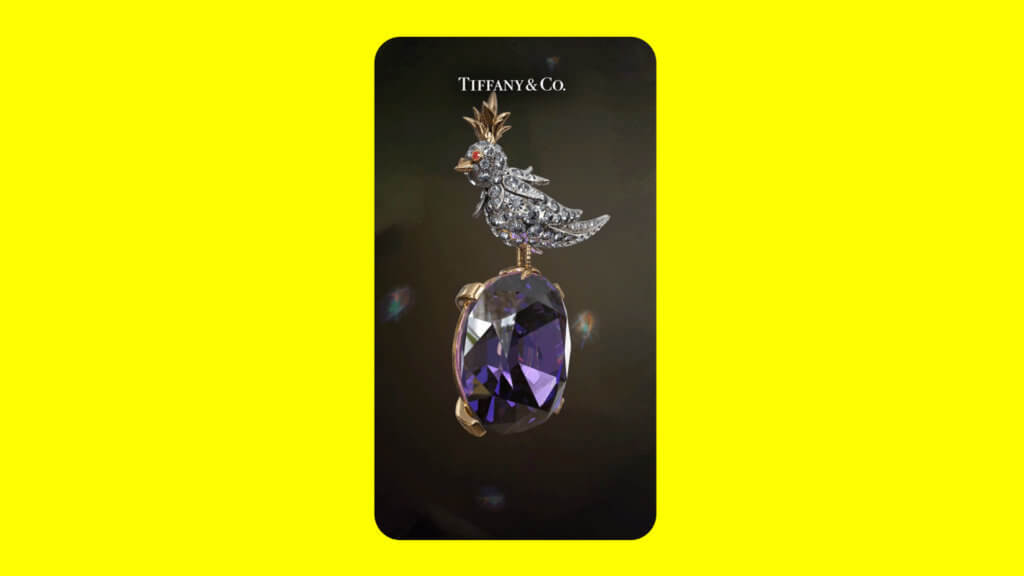

But while augmented shopping was certainly a huge focus of the conference, Snap has also been working on other aspects of AR tech. Big changes are coming to Lens Studio, Snap’s AR creation software, including machine learning-enabled environment matching and ray tracing, an advanced graphics capability.

A key use case presented during the conference: more realistic jewelry models for virtual try-on applications, with a Tiffany & Co. virtual model – courtesy of Snap

A new analytics package is also coming to Lens Studio; it will help developers understand how users interact with their experiences during sessions.

A new collection of backend services called Lens Cloud was also announced. The collection consists of a storage service, location-based services, and multi-user experience services. While multi-user experiences and location-based Lenses have been teased by Snap for a while now, the cloud storage feature is promising in that it could allow persistence within experiences. No release date was promised, though.

And because the more the merrier when it comes to spreading AR adoption, this year’s conference was also rich in partnership announcements. Snap announced a multi-year partnership with Live Nation to launch AR-enabled immersive concerts and festivals. That looks promising for the entertainment world! We can only imagine what an AR-enabled immersive match in the stadium would look like… but wait… we already did it!

We will end our recap of the Partner Summit with one final partnership announcement: the Snap Camera is now available on the lock screen of the Google Pixel 6, so Snapchatters no longer miss a moment while unlocking their phones.

Google Pixel 6 new “Quick Tap to Snap” feature

Google I/O 2022: the comeback of the Google Glasses

While Google I/O has traditionally been a software-focused event, Google surprised us this year with some exciting hardware announcements as well. We won’t spend too long on that subject, as it is not the purpose of this article, but as expected the Pixel 6a was announced, along with an exciting teaser of the Pixel 7 and 7 Pro! Other reveals included the Pixel Watch and the Pixel Buds Pro.

Big news that will delight Android developers: the second Android 13 beta is here, and this time non-Pixel phones are also invited to the party.

Now, let’s circle back to what really interests us here: AR.

You have probably already heard of, if not experienced, Live View, the Google Maps augmented reality feature that lets you precisely navigate and understand your surroundings. Well, from now on, third-party apps will be able to use this feature as well!

On the software side, ARCore, Google’s augmented reality platform, is getting the new Geospatial API, which uses Google Earth 3D models and the Street View database to let developers build location-based AR experiences. How does it differ from, for example, Apple’s location anchors?

Well, with these anchors, you won’t have to be on-site to build them! (If you’re an AR developer yourself, you’ll get the excitement!)

But the biggest thrill Google gave us came from the teased comeback of the Google Glasses, if we can really call them that. A decade after the failure of the previous Google Glasses, the firm has seemingly taken a totally different approach this time around. The original had two issues: on the one hand, the hardware simply wasn’t ready; on the other hand, Google never really offered tangible use cases for its glasses. For those reasons, they never made it far beyond the developer community. But the wearable teased during the I/O conference is a real turnaround. On the first point, AR tech and hardware capabilities are obviously not what they were 10 years ago. As for the purpose Google has set for these glasses, it could hardly be nobler, since it is one of the company’s core missions: bringing knowledge to everyone, everywhere. Even if what we saw is just a demo video of a prototype, it certainly looks promising!

“Today we talked about all the technologies that are changing how we use computers and access knowledge. We see devices working seamlessly together, exactly when and where you need them and with conversational interfaces that make it easier to get things done.

The AR capabilities are already useful on phones and the magic will really come alive when you can use them in the real world without the technology getting in the way.

That potential is what gets us most excited about AR: the ability to spend time focusing on what matters in the real world, in our real lives. Because the real world is pretty amazing!

It’s important we design in a way that is built for the real world — and doesn’t take you away from it. And AR gives us new ways to accomplish this.

Let’s take language as an example. Language is just so fundamental to connecting with one another. And yet, understanding someone who speaks a different language, or trying to follow a conversation if you are deaf or hard of hearing can be a real challenge. Let's see what happens when we take our advancements in translation and transcription and deliver them in your line of sight in one of the early prototypes we’ve been testing.”

Sundar Pichai, CEO of Google

Augmented reality can break down communication barriers – and help us better understand each other by making language visible. Watch what happens when we bring technologies like transcription and translation to your line of sight. #GoogleIO ↓ pic.twitter.com/ZLhd4BWPGh

— Google (@Google) May 11, 2022

This article was written by Immersiv.io’s Dev Lab, a team of experienced AR developers who create and implement AR applications using the latest technologies and dev kits on the market.

Special thanks to Maria!