
Lens Fest 2021: spotlight on Snap's AR community

By Immersiv’s Dev Lab • 16 Dec 2021 • 6 min

From December 7 to December 9, Snap Inc. hosted its annual event, Lens Fest: three days of talks and workshops in which Snap presented the new features of its AR creation tools, shared more details about Spectacles, and outlined its plans to help the AR community grow.

Lens Studio 4.10 is here, and the boundary between the digital and physical worlds has never been thinner

Lens Studio is the AR creation tool developed by Snap Inc. With Lens Studio, you can build powerful augmented reality experiences that enhance the way we communicate. More than 200 million people use and engage with Lenses on Snapchat every day.

What is a Lens? It is the name Snap gives to AR experiences created in Lens Studio. They range from the famous face filters used on Snapchat to more complex experiences such as augmented art exhibitions or even AR mini-games.

Over the last year, a suite of new features has been added to Lens Studio, allowing creators to bring their imagination to life.

Among the updates, Snap worked on the tool's machine learning capabilities. Lens Studio already had built-in machine learning, used in features such as Segmentation (separating parts of an image, for example a person from the background of a photo) and Skeletal tracking (taking the image of a moving subject, such as a person, and locating its joints and head).

Skeletal feature on Lens Studio – courtesy of Snap

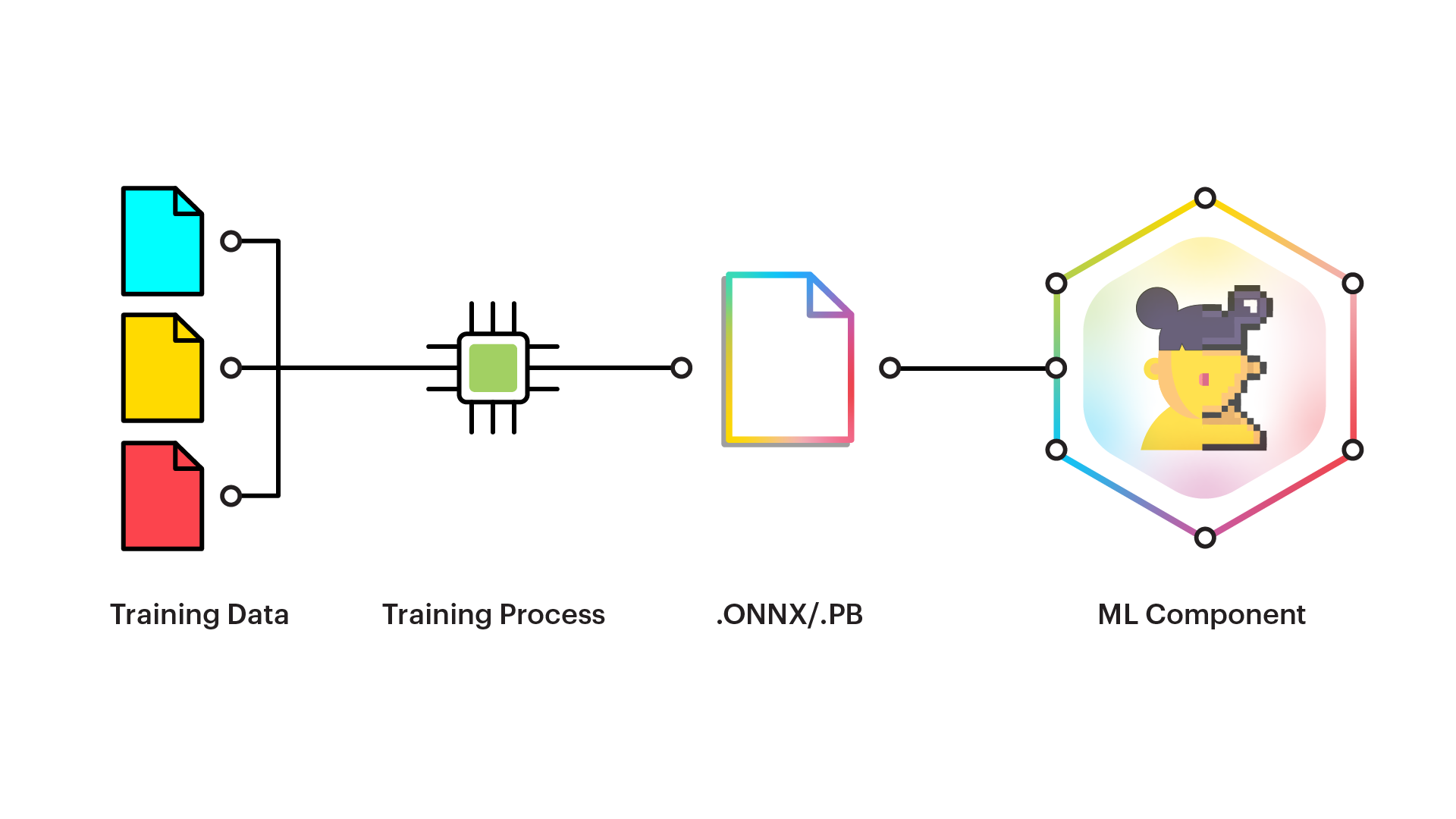
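To make segmentation a little more concrete, here is a minimal sketch of what an effect can do once a model has produced a segmentation mask. It is not Lens Studio's API: the function `replace_background` and the tiny grayscale "images" are hypothetical, and we assume the mask holds a value between 0 (background) and 1 (person) for each pixel, which is then used as an alpha value to blend the camera frame over a new background.

```python
# Hypothetical sketch (not Lens Studio's API): blend a camera frame over a new
# background, using a segmentation mask as per-pixel alpha
# (1.0 = person, 0.0 = background).
Image = list[list[float]]  # tiny grayscale images, values in [0, 1]


def replace_background(frame: Image, mask: Image, background: Image) -> Image:
    """Return mask * frame + (1 - mask) * background, pixel by pixel."""
    return [
        [m * f + (1.0 - m) * b for f, m, b in zip(frow, mrow, brow)]
        for frow, mrow, brow in zip(frame, mask, background)
    ]


frame = [[0.9, 0.8], [0.7, 0.6]]       # camera pixels
mask = [[1.0, 0.0], [0.5, 1.0]]        # model output: person, background, edge, person
background = [[0.0, 0.0], [0.2, 0.2]]  # replacement backdrop
print(replace_background(frame, mask, background))
```

The 0.5 value shows why soft masks matter: pixels on the edge of a person are blended rather than cut out, which avoids jagged outlines.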

What is new since Lens Studio 3.0 is SnapML, which lets creators import their own machine learning models to build their own ML-powered features. This applies to computer vision but also to “ML-based visual effects.” For example, we could imagine integrating a field-detection model from Immersiv’s data lab and superimposing our virtual field on top of the real one.

SnapML overview – courtesy of Snap

Lens Studio now also integrates 3D hand and body tracking, as well as Scan object recognition technology. These new features let creators and developers build more interactions between Lenses and the physical world.

Lens Studio 3D multi-body & hands tracking – courtesy of Snap

Making AR experiences more immersive is a cornerstone of Snap's approach. The newly launched Lens Studio 4.10 integrates Snapchat's Sounds library, so creators can add audio content to their Lenses.

But the two most anticipated new features in Lens Studio are “Real World Physics” and “New World Mesh.” The first lets creators apply the laws of physics to AR elements, making them look more realistic than ever. New World Mesh goes a step further, letting Lens creators use depth information and an understanding of the world's geometry. As a result, experiences created in Lens Studio now look like part of the real world.

Lens Studio real-world physics – courtesy of Snap

Lens Studio world mesh and depth understanding – courtesy of Snap
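Under the hood, physics for AR objects comes down to integrating forces over small time steps, frame after frame. The sketch below is a hypothetical illustration, not Snap's physics engine: it drops a single object under gravity using semi-implicit Euler integration and bounces it off a floor plane. The names `Body`, `step`, `floor_y` and `restitution` are our own, and we assume the floor height would come from the world mesh's understanding of the scene.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the world "up" axis


@dataclass
class Body:
    y: float   # height of the AR object in metres
    vy: float  # vertical velocity in m/s


def step(body: Body, dt: float, floor_y: float = 0.0, restitution: float = 0.5) -> None:
    """Advance one frame with semi-implicit Euler and bounce off a floor plane.

    floor_y stands in for the ground height a world mesh could provide (assumption).
    """
    body.vy += GRAVITY * dt           # update velocity first...
    body.y += body.vy * dt            # ...then position, using the new velocity
    if body.y < floor_y:              # the object went through the floor
        body.y = floor_y              # push it back onto the surface
        body.vy = -body.vy * restitution  # bounce, losing some energy


ball = Body(y=1.0, vy=0.0)
for _ in range(120):  # two seconds at 60 frames per second
    step(ball, 1 / 60)
print(f"height after 2 s: {ball.y:.3f} m")
```

Updating velocity before position (semi-implicit rather than explicit Euler) is a common choice in game-style physics because it stays stable at typical frame rates.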

Bringing augmented reality into the real world is one thing, but to create more immersive experiences, it has to go both ways. Thanks to a new API library, Lens creators can now integrate real-world data such as live stock prices or weather into their experiences.

Lens Studio real-world data API – courtesy of Snap

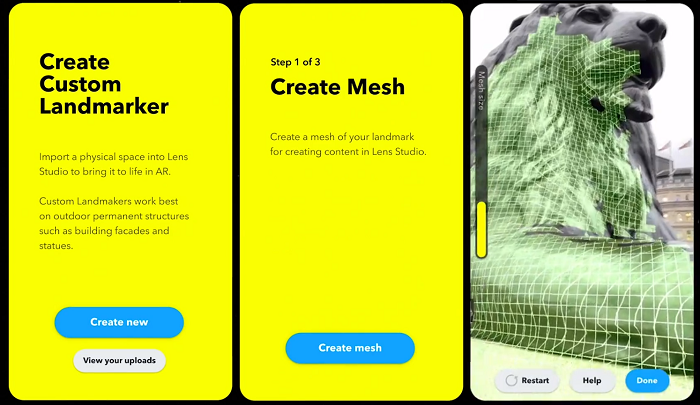

Landmarkers launched back in 2019 and Local Lenses in 2020. Soon, creators and developers will be able to build AR experiences mapped to custom landmarks right in their city, or even in their backyard! The tool initially offered five locations (Buckingham Palace, the Eiffel Tower, the Flatiron Building, the TCL Chinese Theatre and the US Capitol), and the attached Lenses were suggested to users when they were near one of these monuments. With the new update, creators will be able to create their own Landmarker by directly scanning a real-world object (a statue or a building, for example) with LiDAR, and then superimpose virtual elements on top of it.

Lens Studio custom landmarkers – courtesy of Snap

All these new features are designed to help creators build the most entertaining, realistic and helpful experiences. And to make these experiences even more immersive, what better way than to live them through AR glasses like Spectacles?

Spectacles give you a glimpse of what life in the metaverse will look like

A few months ago, we wrote a piece on the Snap Partners Summit. During Lens Fest, Snap Inc. officially revealed more details about the features of its upcoming AR-enabled Spectacles: “We introduced Spectacles over five years ago as a fun, hands-free camera designed to help you capture your point of view and stay in the moment. Since then, we’ve taken an iterative, public approach to improve each generation and learning alongside the community. Now, our next-generation glasses (with AR display) have helped designers manufacture hundreds of Lenses that bring new perspectives to the things we do every day.”

Being able to live augmented reality experiences is great, but being able to share them with friends and family is even more exciting! Snap announced that Spectacles will support Connected Lenses, so that several people can interact with the same experience together.

Connected lens on Spectacles – courtesy of Snap

Spectacles will also support location triggers, which let users experience Lenses attached to a place when they are within a given GPS radius of it (a museum or a local neighborhood, for example).
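A location trigger of this kind essentially checks whether the user's GPS position falls inside a circle around a point of interest. The sketch below assumes a simple circular geofence and uses the haversine great-circle distance; the function names, coordinates and 200 m radius are hypothetical and are not part of any Spectacles API.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def in_trigger_zone(user: tuple[float, float], center: tuple[float, float], radius_m: float) -> bool:
    """Hypothetical location trigger: True when the user is within radius_m of center."""
    return haversine_m(*user, *center) <= radius_m


# Hypothetical trigger: a Lens attached to a museum entrance, with a 200 m radius.
MUSEUM = (48.8606, 2.3376)
print(in_trigger_zone((48.8610, 2.3370), MUSEUM, 200.0))  # → True (about 60 m away)
print(in_trigger_zone((48.8584, 2.2945), MUSEUM, 200.0))  # → False (about 3 km away)
```

Because the check only needs a distance and a radius, it is cheap enough to run on every location update.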

For now, Spectacles are not commercially available. They are only accessible to developers and creators, so they can iterate and experiment with AR creation and its current limits. We are fortunate to be among the few chosen by Snap to work on these next-gen AR glasses! Together, we are pushing back the limits of AR creation.

Test on the Spectacles by Immersiv’s dev team

Augmented reality is inventing new ways for us to interact with each other and with the world around us. The challenge now is to keep creating, testing the limits of the AR world and making it accessible to everyone.

Helping AR creators expand the AR world

Snap’s strategy for supporting its creator community is clear: innovation, recognition and monetization. That means giving creators the means and the structure to innovate, recognizing their work and talent, and, of course, helping them monetize their creations. To support this strategy, Snap has developed several programs:

- the Spectacles Creators Program. Through this program, Snap partnered with dozens of creators around the world to explore new ways to fuse fun and utility through immersive augmented reality experiences.

- the Snap Lens Network. By joining this program, creators benefit from direct contact with the Lens Studio team for technical and creative support! They also get a verified account, a customizable Snapchat Profile (which can include their website link, email and bio), access to beta builds of Lens Studio, networking opportunities and meet-ups with other creators, event and media collaboration opportunities, and revenue opportunities.

- the Creator Marketplace. Snap’s Creator Marketplace enables businesses to discover and partner with Snap’s creator community in a scalable and seamless way. It is a good way for creators to monetize their creations!

Earlier this year, Snap also introduced Ghost, its AR innovation lab for developers and small teams. Its mission: to explore the technical and creative limits of augmented reality. There are currently 20 Ghost fellows, working closely with Snap engineers and designers to push back the limits of AR creation and find new ways to deliver immersive experiences.

Finally, Snap announced the launch of a new AR content accelerator program for creators called “523,” which will support “small minority-owned content companies and creators who traditionally lack access and resources.” Approved applicants will receive funding ($10,000 per month) and support from the Snap team to help them develop new augmented reality experiences.

This article was written by Immersiv.io’s Dev Lab, a team of experienced AR developers who build and deploy AR applications using the latest technologies and development kits on the market.

Special thanks to Maria!